How the Internet Broke Everyone’s Bullshit Detectors: The AI and Data Reality

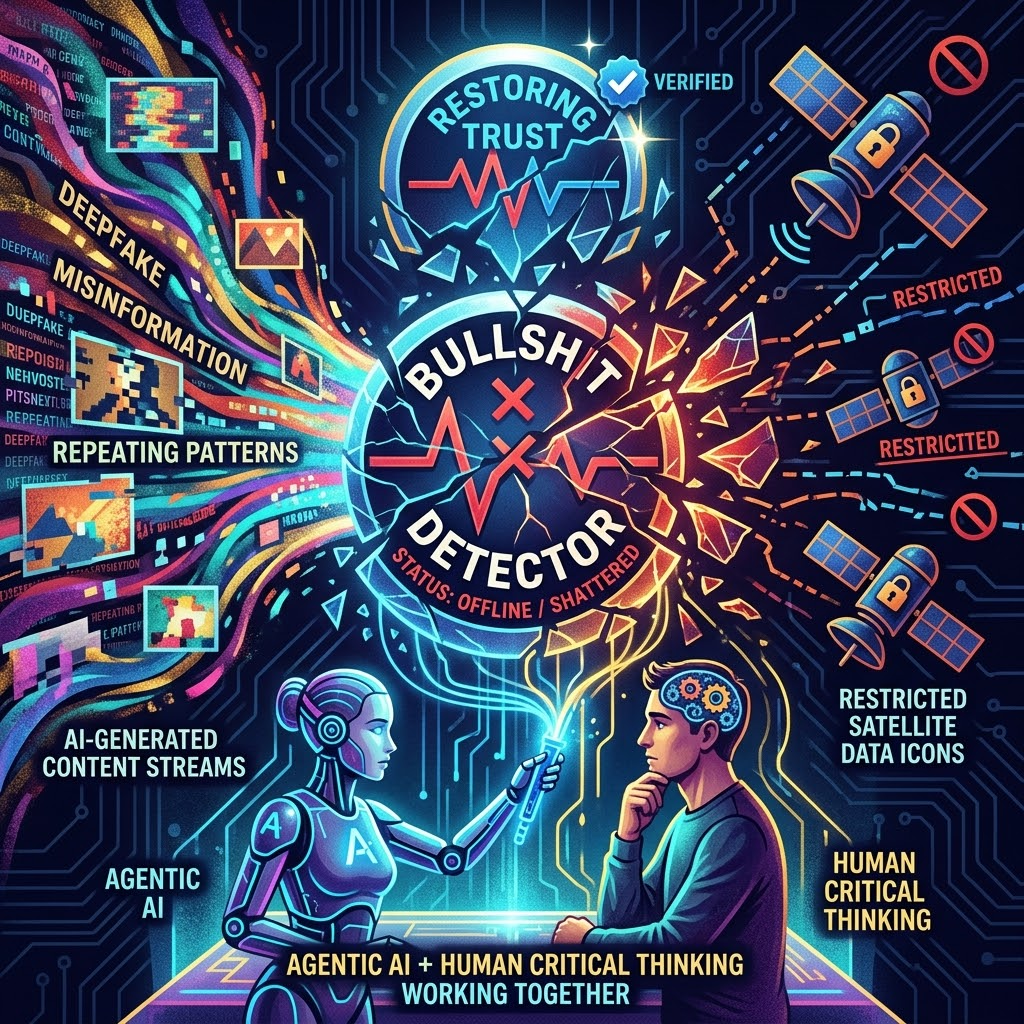

The internet broke everyone’s bullshit detectors. Synthetic media now floods every platform, access to satellite imagery faces new restrictions, and the tools we once trusted to separate truth from fiction are failing at scale. The problem isn’t just that misinformation exists; it’s that our collective ability to identify it has collapsed under the weight of technological disruption.

AI-generated content is increasingly hard to distinguish from human-created material, eroding the foundation of digital trust. Text, images, video, and audio can now be fabricated with tools accessible to anyone with an internet connection. Deepfakes have evolved from novelty to weapon, deployed in contexts ranging from political manipulation to financial fraud. When every piece of content carries the potential to be synthetic, skepticism becomes the default mode, and exhaustion follows close behind.

The scale of this content explosion overwhelms traditional fact-checking mechanisms. Human moderators and legacy verification systems cannot keep pace with the volume of material requiring review. Social platforms struggle to label synthetic content consistently, and even when labels appear, users often ignore them or distrust the labeling authority itself. The result is a digital ecosystem where authenticity has become nearly impossible to verify at speed.

Geospatial data restrictions compound the verification crisis in unexpected ways. Satellite and drone imagery once provided an independent layer of truth, offering visual confirmation that could cut through narrative disputes. Now, access to high-resolution geospatial data faces tightening controls due to national security concerns, privacy regulations, and commercial gatekeeping. When fact-checkers cannot independently check claims about physical events or environmental changes against satellite imagery, another critical verification pathway closes.

These restrictions create information asymmetries that favor powerful institutions while leaving journalists, researchers, and citizens without the tools to challenge official narratives. Events occurring in remote regions or contested territories become harder to document independently. The gap between what governments and corporations can see and what the public can verify widens, feeding conspiracy theories and undermining institutional credibility.

Agentic AI verification models represent a potential counterweight to synthetic content proliferation. These autonomous systems can analyze content at machine speed, cross-reference claims against vast databases, and identify inconsistencies that human reviewers might miss. Unlike passive AI tools that wait for human prompts, agentic models operate independently to flag suspicious content, trace information provenance, and assign credibility scores based on multiple signals.

The technology behind these verification agents draws on advances in AI-driven search, enabling near-real-time fact-checking across multiple sources. Early implementations show promise in detecting coordinated inauthentic behavior, identifying manipulated media, and mapping misinformation networks. However, these systems face their own credibility challenges: who trains them, what biases they encode, and whether they can be trusted to make consequential judgments about truth.

The opacity of AI verification models raises fundamental questions about accountability. When an autonomous system flags content as false or suspicious, users need transparency about the reasoning behind that determination. Without explainability, AI verification risks becoming another black box that users either blindly trust or reflexively reject. The irony of using AI to combat AI-generated misinformation is not lost on skeptics who see an arms race with no clear winners.
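As a rough illustration of scoring "based on multiple signals" without becoming a black box, the sketch below combines a few signals into a weighted average and returns the per-signal breakdown alongside the verdict. Everything in it is an assumption made for illustration: the signal names, the weights, the 0.5 threshold, and the `Signal`, `credibility_score`, and `explain` helpers are hypothetical and do not describe any real verification product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """One piece of evidence about a content item (hypothetical structure)."""
    name: str
    score: float   # 0.0 = looks suspicious, 1.0 = looks credible
    weight: float  # relative importance; values here are illustrative only


def credibility_score(signals):
    """Weighted average of signal scores, in the range 0.0 to 1.0."""
    for s in signals:
        if not 0.0 <= s.score <= 1.0:
            raise ValueError(f"score out of range for signal {s.name!r}")
        if s.weight < 0:
            raise ValueError(f"negative weight for signal {s.name!r}")
    total_weight = sum(s.weight for s in signals)
    if total_weight <= 0:
        raise ValueError("at least one signal with positive weight is required")
    return sum(s.score * s.weight for s in signals) / total_weight


def explain(signals, threshold=0.5):
    """Return the verdict together with the inputs that produced it."""
    score = credibility_score(signals)
    return {
        "score": round(score, 3),
        "flagged": score < threshold,  # assumed cutoff, not a standard
        "signals": {s.name: s.score for s in signals},
    }


if __name__ == "__main__":
    report = explain([
        Signal("provenance_metadata", 0.2, 2.0),
        Signal("source_reputation", 0.8, 1.0),
        Signal("cross_reference_match", 0.5, 1.0),
    ])
    print(report)
```

Returning the individual signal values with the flag is the point of the design: a reader or auditor can see which input drove the result and contest it, rather than having to accept or reject an unexplained label.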

Human digital literacy remains the essential complement to technological solutions. No verification system, however sophisticated, can function effectively if users lack the skills to interpret its outputs critically. Digital literacy programs must evolve beyond basic media literacy to address the specific challenges of synthetic content, algorithmic curation, and the AI-powered search platforms that are reshaping access to information.

Skepticism fatigue presents a particularly dangerous dimension of the broken detector phenomenon. When everything requires verification and verification itself becomes exhausting, many users simply disengage or retreat into trusted echo chambers. This withdrawal from critical evaluation makes populations more vulnerable to manipulation, not less. Effective digital literacy must teach not just how to verify information, but how to manage cognitive load and maintain engagement without burnout.

Ethical AI development practices intersect directly with verification credibility. Systems trained on biased datasets or designed without diverse input will reproduce and amplify existing prejudices in their truth assessments. Because AI systems are deployed at scale, the biases they embed operate at scale too, which makes them harder to detect and correct than the biases of individual human reviewers. Transparent development processes, diverse training data, and ongoing audits become essential safeguards.

Collaborative strategies offer the most realistic path toward restoring functional bullshit detectors. Platform companies, fact-checking organizations, academic institutions, and civil society groups must coordinate rather than compete. Shared databases of known synthetic content, standardized labeling protocols, and open-source verification tools can create network effects that individual actors cannot achieve alone. Cross-sector collaboration also distributes the trust burden, reducing dependence on any single gatekeeper.

These partnerships face significant obstacles, including competitive incentives, jurisdictional conflicts, and ideological disagreements about what constitutes misinformation. Despite these challenges, pilot programs suggest that coordinated responses can identify and contain viral falsehoods faster than siloed efforts. The key is designing systems that preserve editorial independence while enabling technical interoperability. Verification networks must resist both centralized control and fragmentation into incompatible fiefdoms.
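To make the idea of a shared database of known synthetic content a little more concrete, here is a minimal sketch in which several organizations report items to a common registry keyed by a SHA-256 fingerprint. The `SharedRegistry` class, its methods, and the reporter names are hypothetical. The sketch also assumes exact byte-for-byte matching, which a re-encoded or cropped copy would defeat; a real system would likely need perceptual hashing or provenance metadata instead.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Exact-match fingerprint; any change to the bytes changes the digest."""
    return hashlib.sha256(content).hexdigest()


class SharedRegistry:
    """In-memory stand-in for a cross-organization registry (illustrative)."""

    def __init__(self):
        self._labels = {}  # digest -> list of (reporter, label)

    def report(self, content: bytes, label: str, reporter: str) -> str:
        """Record a label from one reporter and return the fingerprint."""
        digest = fingerprint(content)
        self._labels.setdefault(digest, []).append((reporter, label))
        return digest

    def lookup(self, content: bytes):
        """Return every (reporter, label) pair recorded for this content."""
        return list(self._labels.get(fingerprint(content), []))


if __name__ == "__main__":
    registry = SharedRegistry()
    clip = b"bytes of a known fabricated clip"
    registry.report(clip, "synthetic", reporter="factcheck-org-a")
    registry.report(clip, "synthetic", reporter="newsroom-b")
    print(registry.lookup(clip))
    print(registry.lookup(b"unseen content"))
```

Keeping each reporter's label separate, rather than storing one merged verdict, is one way to share technical infrastructure while leaving the editorial judgment with each participant.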

Workforce implications add another layer of urgency to the verification crisis. As organizations recognize the scale of the challenge, demand grows for professionals skilled in AI auditing, synthetic media detection, and digital forensics. The emergence of AI agents in search and content curation reshapes entire industries, requiring rapid workforce adaptation. Educational institutions struggle to develop curricula for roles that didn’t exist five years ago, while professionals face pressure to acquire verification skills alongside domain expertise.

The economic dimension cannot be ignored. Misinformation carries measurable costs through market manipulation, fraudulent schemes, and reputational damage. Businesses increasingly recognize that trust is infrastructure, not marketing. Investment in verification capabilities becomes a competitive necessity rather than a compliance checkbox. This shift drives innovation but also raises concerns about verification becoming a luxury good available only to well-resourced organizations, while smaller entities and individual users remain vulnerable.

Regulatory frameworks lag dangerously behind technological reality. Policymakers debate liability standards, transparency requirements, and content moderation obligations while the landscape they’re attempting to regulate transforms beneath them. Effective regulation must balance platform accountability with free expression protections, incentivize good-faith verification efforts, and impose consequences for negligent or malicious behavior. The challenge is crafting rules flexible enough to accommodate rapid innovation while establishing clear boundaries against harmful practices.

Restoring functional bullshit detectors requires technological and human oversight working in concert. No single solution, whether agentic AI, digital literacy programs, or platform policy changes, can address the multifaceted nature of the crisis alone. The path forward demands sustained investment in verification infrastructure, commitment to transparency and accountability, and recognition that trust is a public good requiring collective maintenance. The internet may have broken our detectors, but the tools to rebuild them exist if we can summon the coordination and will to deploy them effectively.